The sorry state of banking research

Economic academic research can be curious. In particular since the financial crisis, academics have focused on proving that free markets were inherently unstable and that government intervention was required to stabilise the economy.

While George Selgin incinerates a recent paper on Canadian private currency, I found three other recent papers that try too hard to convince us that markets aren’t perfect.

The first one, titled Short-termism Spillovers from the Financial Industry, attempts to demonstrate that large listed banks are subject to short-termism in order to meet quarterly earnings figures, and that this short-termism affects their behaviour towards their clients and, in turn, borrowers’ long-term investment policies. The authors conclude that short-termism is not optimal from an economic efficiency point of view.

In their words:

First, we find that lenders facing incentives to meet quarterly earnings benchmarks are more likely to extract material benefits from borrowers. Second, lenders with short-termism incentives push relatively high-quality borrowers into material covenant violations because these are precisely the borrowers from whom rents can be extracted. Because unhealthy borrowers are already selected for material covenant violations by lenders both with and without short-termism incentives, only relatively healthy borrowers are left to be targeted by incremental attention. Third, affected borrowers are more likely to reduce capital investment and research and development (R&D) expenditures. Given the selection of higher quality borrowers, it is particularly likely that these real investment effects on borrowers are value-destroying. Finally, we find that the market reaction to announcements of material covenant violations is 88 basis points lower among borrowers whose lenders face short-termism incentives, which suggests that the incremental attention from lenders with short-termism incentives does not improve shareholder value.

While they fall short of recommending government or regulatory intervention to maximise value-enhancing investments, the implication of their paper is clear: free markets do not optimally allocate capital. But don’t take their word for it. This paper is highly problematic for a number of reasons, outlined below.

First, they use equity analysts’ consensus earnings per share forecasts as a benchmark for short-termism, despite the highly inaccurate nature of those estimates. Indeed, those are constantly revised, and banks are fundamentally very difficult to model due to the opacity of their balance sheets. As a result, analysts’ estimates are often wide of the mark and do not represent a reliable indicator. Sadly, the whole logic of this paper rests on this single benchmark.

Second, this paper makes rather strange assumptions about the utility of covenants in loan documentation. Covenants are usually agreed upon during the negotiation of the lending facility in order to protect the lenders by preventing the borrower from fundamentally altering the nature of its balance sheet or of its business model. A breach of covenant is a contractual breach that is considered a serious event by the lenders as it implies a decline in asset quality. Yet this paper seems to argue that enforcing covenants is a bad thing, which ends up negatively impacting the borrower’s ability to grow in the long run.

They go as far as qualifying covenant enforcement as ‘extracting rents’ from borrowers. This is incredible: covenants are rules that are in place for a reason. Not to enforce them on a regular basis would undermine the effectiveness of those rules altogether and probably lead to much worse outcomes. Moreover, researchers qualify some of those covenant-breaching borrowers as ‘high-quality’ and ‘financially healthy’. I can assure you that, in the real world, covenant-breaching customers are anything but ‘high-quality’ and are usually flagged as ‘risky’ by bankers.

Third, even assuming their logic and methodology are correct, the effects they find are small: they calculate that borrowers affected by enforced covenant breaches are only 2.4% more likely to cut R&D spending and 4.9% more likely to cut capital expenditure. Borrowers are also only 1.4% more likely to switch lenders for their next loan and financial market reactions are marginal (88bp). Talk about a storm in a teacup.

But more importantly, my main concern is that the authors of this paper never ever benchmark their results. Or, more accurately, they benchmark the results they obtained against a hypothetical ‘social optimal’. As such, they fall into the Nirvana fallacy trap that Selgin also refers to in his post: free markets are not perfect but no amount of government intervention could fix those admittedly minor shortcomings.

The second one is titled Macroeconomics of bank capital and liquidity regulations and studies the welfare effects of banking regulations. Or rather, it ‘models’ this welfare under very specific assumptions. So specific actually, that I dismissed the paper straight away.

In my view this paper exemplifies a lot that is wrong with today’s economic research: it is based on a highly theoretical mathematical model with embedded assumptions and limitations that result in outcomes that do not nearly reflect the real world. Yet, those economists still managed to conclude that “capital and liquidity regulations generally mutually reinforce each other”, and that “the optimal regulatory mix consists of relatively high capital and liquidity requirements” (which they define as a very high 17.3% leverage ratio, more than ten percentage points above that of most banks today). They evidently conclude that their analysis provides broad support for Basel III’s regulatory framework, consequently seen as welfare-enhancing even though it doesn’t go as far as those economists would like.

Well… the one huge issue with this paper rests on this particular assumption underlying the trade-off faced by regulators in their mathematical model:

On the one hand, banking regulation may reduce the supply of credit to the economy. On the other hand, it improves credit quality and allocative efficiency. Accordingly, regulation tends to result in less, but more productive lending.

This is my reaction to this sort of nonsense:

There is not a single glimpse of reality in believing that regulation is more effective than free markets at allocating capital in the economy. If anything, as I highlighted so many times before, regulation has diverted the allocation of credit from productive uses (i.e. commercial and industrial loans) towards unproductive ones (i.e. real estate), which has been economically damaging and one of the reasons behind the financial crisis.

As a result, this paper includes some of its own conclusions in its assumptions, leading to circular reasoning: banking regulation improves allocative efficiency, therefore we need banking regulation.

Finally, Bank Capital and Dividend Externalities highlights that banks fail to ‘internalise’ the effects of dividend payments and capital policy on the stability of the wider financial system. The researchers theorise that a bank increasing its dividends harms the claims that its own bank creditors have on its balance sheet in a bankruptcy scenario, thereby weakening the financial strength of the whole network. In such a system, they state that bank capital becomes a ‘public good’. The logical conclusion to this lack of systemic coordination is obviously government intervention: regulators should put dividend restriction measures in place when necessary.

But, here again, this paper suffers from major design flaws:

– Bank creditors are the only ones considered, despite the fact that banks have a multitude of creditors, including depositors. If dividend payments harm bank and other money market creditors, they also surely impact depositors and bondholders.

– They assume that dividend payments decrease the value of the bank by lowering its probability of survival. They reject the signalling theory of dividend payments despite admitting it has some backing in the literature: reducing dividends is a negative signal about the financial health of the institution.

– Their empirical evidence is limited to a couple of data points taken during the latest financial crisis: a couple of banks increased dividends before collapsing. They do not take into account the fact that those followed Bear Stearns’ bail-out by the Fed, which sent a specific TBTF signal to both the market and bankers themselves.

– As often in the banking literature, they make a big deal of interconnectedness, yet seem to forget that all industries have interconnected members. The decisions made by a large automaker also affect its whole supply chain and their employees. I have yet to see tens, if not hundreds, of research papers arguing that automakers fail to ‘internalise’ the impacts of their decisions, which are not always ‘socially optimal’.

– More damning, they prove their theory by modelling a financial system that includes just two banks, consequently suppressing any opportunity for exposure diversification. This is completely unrealistic. Banks have tens of other banking counterparties and already factor in their counterparty assessment the possibility that capital policy might change. But thanks to the diversification effect, those changes usually only affect them at the margin. A two-bank model does not capture this critical point. To be fair, those economists do admit that their model is a simplified one. Yet this admittedly weak theoretical basis does not seem to make them think twice before making policy recommendations.

– Finally, this paper falls into the same Nirvana fallacy trap as the first one reviewed above: they do not make a convincing case that an effective alternative exists, and assume away Public Choice issues by relying on the actions of omniscient regulators.

This is the sorry state of affairs in current economic research. By focusing on highly theoretical mathematical models based on very limiting – if not totally unrealistic – assumptions, damaging policy recommendations are outlined and subsequently serve as justifications for regulators’ actions. Clearly, nothing much has changed since the days of Diamond and Dybvig’s flawed model (see White, The Theory of Financial Institutions, 1999). As I recently pointed out in the case of macroprudential research, this is a reminder that it is critical to read the body of research papers (and not only their abstracts and conclusions). Sadly, few people seem bothered to do so.

Are we all macroprudentialists now?

Update: a revised and extended version of this post was published on Alt-M here

The title of this post (a reference to Milton Friedman’s famous quote) is also the headline of a recent speech by Klaas Knot, President of the Netherlands Bank, a question to which he answers:

In the spirit of Pentti’s thinking my answer is: Yes – as long as we stay eclectic, pragmatic and flexible. And we take the interactions of monetary and macroprudential policies into account, and coordinate the two policies.

While there is some truth to the second half of his answer (monetary policy and macropru do interact), I find myself very uncomfortable with its first half: if we are all macroprudentialists now, we are heading for disaster.

As highlighted on this blog a number of times:

- There is barely any evidence that macropru has any effect (see also here and here) but on the other hand it ‘leaks’ and does have distortive impacts on the allocation of credit in the economy.

- It cannot counteract the effect of monetary policy.

- It opens the door to ‘bureaucratic tyranny’ (as John Cochrane said) and assumes away all Public Choice issues.

- It assumes omniscient regulators and rejects the conclusions of the socialist calculation debate or the insights of Hayek’s concept of knowledge dispersion.

But as research pieces presented last September at the BIS/Central Bank of Turkey seminar on macroprudential regulation demonstrate, groupthink is widespread: economic researchers include many, many important and explicit caveats and limitations within the core text of their papers, yet seem to suddenly ‘forget’ them once it is time to write both the abstract and the conclusion of the same papers.

Out of 19 papers, only one refers to some of the issues listed above and questions some of the fundamentals behind macropru reasoning (Bálint Horváth and Wolf Wagner’s Macroprudential policies and the Lucas Critique, an interesting read). Many others, on the other hand, question the very fundamentals of a market economy: each agent fails to ‘internalise’ the damages that his/her actions have on the market and economy as a whole. Therefore, an external regulator needs to intervene in order to control the agent’s actions and stabilise the overall economy.

This is absurd. The same reasoning could be applied to any good: an agent overproducing a certain good fails to ‘internalise’ the damages it causes to the market and his industry. As a result, this industry needs a central planner to organise it in the most efficient way. We know the fallacy of this logic: government failures are worse and more systematic than market failures. Yet this view prevails in today’s macropru theoretical foundations.

This makes me think that Knot’s speech is an example of moderation in today’s central bank school of thought. Take this recent speech by Alex Brazier, Executive Director for Financial Stability Strategy at the BoE, during a financial regulation seminar at the London School of Economics. It is quite remarkable in the way that it manages to avoid referring to any of the issues listed above (and even contradicts point 2 despite the evidence), depicts macropru as an almost ideal framework, and exemplifies central bankers’ fundamental distrust in free markets.

See this statement for instance:

It’s well known, for example, that banks would choose to have too little capacity to absorb losses – too little equity capital – because their current shareholders don’t bear the full economic costs of their failure or distress. The economy needs better capitalised banks than the free market would deliver.

“Well known”? This makes little sense. This statement is a negation of all historical experiences of stable financial systems during which there was no regulator in place dictating capital requirements.

More perplexing are his later statements about capital buffers:

The results have been transformative. A system that could absorb losses of only 4% of (risk weighted) assets before the crisis now has equity of 13.5% and is on track to have overall loss absorbing capacity of around 28%.

This is wrong. As we recently saw, banks had regulatory Tier 1 ratios of 8 to 9% before the crisis. Not 4%, which was the regulatory minimum. Mr Brazier is therefore comparing the pre-crisis regulatory minimum to banks’ current average capital buffer.
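To make the like-for-like point concrete, here is a minimal sketch using only the figures quoted above. It assumes, for illustration, that the pre-crisis Tier 1 ratios and the current 13.5% equity figure are broadly comparable, which may not strictly hold given changes in capital definitions. The variable and function names are mine.

```python
# All figures are in percent of risk-weighted assets, as quoted in the post.
PRE_CRISIS_MINIMUM = 4.0        # regulatory minimum used in Brazier's comparison
PRE_CRISIS_ACTUAL = (8.0, 9.0)  # Tier 1 ratios banks actually held (low, high)
CURRENT_EQUITY = 13.5           # current equity figure quoted by Brazier


def increase(current: float, before: float) -> float:
    """Increase in the capital ratio, in percentage points."""
    return current - before


# Comparing today's actual level with the pre-crisis *minimum*.
headline_increase = increase(CURRENT_EQUITY, PRE_CRISIS_MINIMUM)

# Comparing today's actual level with the pre-crisis *actual* levels.
like_for_like_increase = tuple(
    increase(CURRENT_EQUITY, actual) for actual in PRE_CRISIS_ACTUAL
)

print(f"Headline increase: {headline_increase} points")
print(f"Like-for-like increase: {like_for_like_increase} points")
```

Under those assumptions, roughly half of the headline improvement comes from switching the basis of comparison rather than from thicker buffers.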

More surprisingly, Brazier’s speech includes a whole part attempting to convince us that “clairvoyance is not a reasonable standard to be held to”, and that “[regulators’] mandate is to break free of the shackles of forecasting, to free us from trying to predict if the economy will turn down, and to apply economic analysis to the question of how bad it could be if it did.”

Macroprudential tools are discretionary policies that are supposed to be applied in a countercyclical manner: they are set in such a way to specifically reflect regulators’ forecasts (or beliefs) about where the economy – or certain segments of the economy – is going. Therefore, how on Earth is macropru supposed to work if regulators do not even attempt to understand how the economy is evolving or where the imbalances are building up in the first place? This is all very confusing.

And this is the issue. It is bewildering to see some economists, and virtually the whole central banking and bank regulatory profession, tell us that, despite all its shortcomings, unknowns, internal contradictions and inherent risks and distortions, macroprudential regulation is the way forward. To make things worse, macropru tools are not new: they have been used for a while now, especially by emerging markets, with limited effectiveness (see chart below, taken from one of the seminar papers).

I know we apparently now live in an ‘alternative facts’ world, but academia is supposed to focus on evidence. The amount of research attempting to find a way to control the economy and financial markets through discretionary fluctuations of macroprudential tools is reminiscent of the post-WW2 Keynesian push which, as we know, didn’t end well.

Milton Friedman would have certainly not taught his students that “we are all macroprudentialists now”.

PS: thankfully Kevin Dowd is also supposed to speak at this seminar later this year, which should dispel some of the myths that are being spread

AI: regulatory arbitrage on steroids?

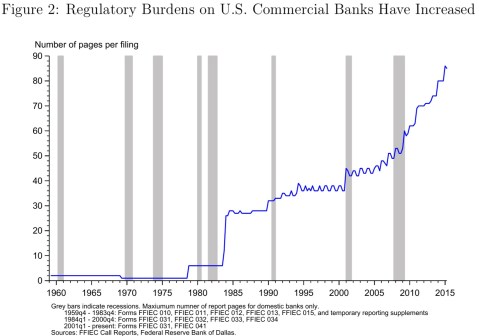

Pretty much everyone has heard of Fintech by now, but a more focused approach to applying new IT technologies to banking is now the nerdier Regtech. Regtech aims at applying Fintech for regulatory and compliance purposes, simplifying a process that has caused headaches to bankers due to the exponential growth of the rulebook they had to follow, and which has also been a pain on the cost side given the number of extra compliance officers they had to hire in an era of lower revenues.

Indeed, the FT reports that:

Citigroup estimates that the biggest banks, including JPMorgan and HSBC, have doubled the number of people they employ to handle compliance and regulation. This now costs the banking industry $270bn a year and accounts for 10 per cent of operating costs. […]

Spanish bank BBVA recently estimated that, on average, financial institutions have 10 to 15 per cent of their staff dedicated to this area. This heavy investment has been necessary in response to the crackdown by regulators that followed the financial crisis. European and US banks have paid more than $150bn in litigation and conduct charges since 2011, Citi estimated.

What’s the solution? ‘Regulatory technology’:

New technologies mean that banks could make vast savings in compliance, according to Richard Lumb, head of financial services at Accenture, who estimated that “thousands of roles” in the banks’ internal policing could be replaced by automated systems.

Many recent Regtech developments involve the use of artificial intelligence to simplify compliance issues that are very burdensome from a staff (and cost) perspective. As Deloitte outlines here (and see an interview on the Financial Revolutionist about applying machine and deep learning to investment strategies here):

The Institute of International Finance (IIF) highlights AI, among others, as it has a range of applications in regulatory compliance and reporting. It can be used in analysing complex trading relationships, trading schemes, patterns and communications between banks, exchanges and other market participants. AI can also be employed to monitor internal conduct and communication to clients, comparing it to quantitative metrics such as supervisory input. As AI relies on computer-based modelling, scenario analysis and forecasting, it can also help banks in stress testing and risk management.

But what I find particularly interesting is this bit:

Another field for AI in financial regulations is to simplify the regulations themselves: there are a multitude of different jurisdictions, products, institutional differences and enforcement mechanisms and it is hoped that AI systems are better in collecting and categorizing them according to rules.

Similar points in an Economist article published a few months ago about Watson, IBM’s AI product:

The next area is to provide clarity about rules. They are sorted by jurisdictions, institutional divisions, products and so forth, and then further broken down between rules and guidance. Watson is getting better at categorising the various regulations and matching them with the appropriate enforcement mechanisms. Its conclusions are vetted, giving it an education that should improve its effectiveness in the future. Promontory’s experts are expected to help Watson learn. A dozen rules are now being assimilated weekly. Thousands are still to go but it is hoped the process will speed up as the system evolves. Ultimately, IBM hopes speeches by influential figures, court verdicts and other such sources will be automatically uploaded into Watson’s cloud-based brain. They can play a role in determining what regulations matter, and how they will be enforced.

Below is a useful chart showing all current Regtech areas and start-ups (you can also find it here):

While the industry has not explicitly said it this way (and probably never will), it seems to me that we’re on our way to AI-driven regulatory arbitrage. Once those systems are ready, AI will be able to navigate through the thousands of regulatory pages and extract the most effective ‘regulatory optimisation strategy’ within and across borders.

If all AI systems used by financial institutions reach the same conclusion, this could lead to a build-up of imbalances and systemic risks that could eventually trigger a crisis, following a process similar to that which contributed to the latest financial crisis: Basel rules facilitated the accumulation of imbalances in the credit market towards real estate lending.

It of course remains to be determined whether AI systems reach the same conclusions in the end. But this is likely to happen, for the following reasons: 1. banks whose systems are less effective will progressively attempt to catch up with the competition, leading to harmonisation in the design of those systems and 2. if AI solutions are provided by third-party firms, harmonisation will occur from the start.

A glimpse of hope remains in that the optimal regulatory arbitrage strategy may be different for financial institutions with different business models (mortgage banks vs. universal banks for instance). But let’s not hold our breath: even in this case, imbalances would still occur and universal banks still account for most of the world’s banking assets by far.

For now, explicit regulatory ‘optimisation’ does not seem to be included in the chart above (although the ‘Government/Legislation’ category could well evolve into a more arbitrage-oriented segment). But how long before it does?

Clarifying confusions on capital requirements

As the Trump administration is considering scrapping parts of the enormous Dodd-Frank act, a number of media and economists look alarmed: Dodd-Frank made the American banking system safer, the argument goes, and getting rid of it would lead to another financial crisis.

While long-time readers of this blog know that Dodd-Frank, and the Basel 3 international accords it is based on, merely continue the mistakes of three decades of regulatory overreach that have brought about the largest financial crisis in decades, I thought it was necessary to clarify a couple of points regarding capital requirements.

In this week’s Economist, two articles seem to admit that, while the act indeed represented an unclear regulatory monster of thousands of pages that mostly penalised smaller financial institutions, it also made the system safer by reinforcing banks’ capitalisation.

In an editorial, the newspaper asserts that:

Onerous though it is, however, the act also achieved a lot. Measures to beef up banks’ equity funding have made America’s financial system more secure. The six largest bank-holding companies in America had equity funding of less than 8% in 2007; since 2010 that figure has stood at 12-14%.

In another article, it adds:

Thanks in part to Dodd-Frank, America’s banks are far safer than they were: the ratio of the six largest banks’ tier-1 capital (chiefly equity) to risk-weighted assets, the main gauge of their strength, was a threadbare 8-9% before the crisis; since 2010 it has been 12-14%.

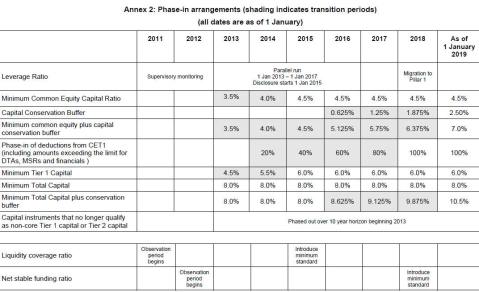

But it is far from clear that Basel requirements are behind banks’ post-crisis thicker capital buffers. See Basel 3 minimum capital requirements below:

Minimum Tier-1 capital requirements are 7.875% (Tier 1 + capital conservation buffer). This is around the 2008 level for large US banks. Hardly an improvement at first glance then.

However, let’s also add the recent SIFI capital surcharge, published by the FSB last November: only two institutions qualified for a 2.5% surcharge (only one of them US-based), but let’s add this figure to our minimum above. We get to a SIFI minimum Tier 1 requirement of 10.375%. This still leaves a gap of almost 2 to 4 percentage points with the 12% to 14% average referred to by The Economist above.
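The arithmetic above can be checked with a short sketch. It uses only the figures quoted in this post; the variable names are mine, and applying the top 2.5% surcharge to every large bank is a deliberately generous assumption, as only two institutions actually qualified for it.

```python
# All figures are in percent of risk-weighted assets, as quoted in the post.
TIER1_MINIMUM_WITH_BUFFER = 7.875  # Tier 1 + capital conservation buffer
SIFI_SURCHARGE = 2.5               # top surcharge (generous assumption: applied to all)
OBSERVED_TIER1 = (12.0, 14.0)      # range quoted by The Economist since 2010

# Minimum Tier 1 requirement for a bank subject to the top surcharge.
sifi_minimum = TIER1_MINIMUM_WITH_BUFFER + SIFI_SURCHARGE

# Capital held above that minimum, which the requirement alone cannot explain.
unexplained_gap = tuple(observed - sifi_minimum for observed in OBSERVED_TIER1)

print(f"SIFI minimum Tier 1 requirement: {sifi_minimum}%")
print(f"Gap to observed ratios: {unexplained_gap[0]} to {unexplained_gap[1]} points")
```

Even under this generous assumption, between roughly 1.6 and 3.6 percentage points of the observed ratios sit above the regulatory minimum.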

Therefore the only conclusion is that there are other parameters and considerations influencing the level of capital ratios upward. One of those parameters is indeed regulatory-related, but is discretionary at bank-level: it is bankers’ own view about the capital buffer they believe they need above the regulatory minimum in order to avoid breaching it in case of sudden large losses. This shows some of the perverse side-effects of strict minima, and I described some time ago that the ‘effective’ capital ratio was actually the differential between the level maintained by the bank and the regulatory minimum. And this ‘effective’ buffer tends to narrow rather than thicken as minima are raised.

The second is exogenous to banks’ decision-making process: the financial crisis has taught a number of investors not to get fooled by headline regulatory capital ratios. Consequently, investors now ask for higher levels of capital in order to compensate for the lack of clarity regarding the quality of capital*. Given that risk-weights (another regulatory construct) have a considerable influence on the level of capital ratios, investors also ask for extra capital buffers to compensate for the distortions they inevitably introduce in the headline figures.

Consequently, had minimal requirements stayed the same, investors would have been highly likely to demand extra protection against the uncertainty introduced by…..those same regulatory requirements.

In the end, the assumption that banks are much better capitalised and that regulation/Dodd-Frank is responsible for this is questionable.

*While Basel 3 and Dodd-Frank have indeed also touched upon the issue of capital quality, it remains unclear how a number of so-called hybrid, or ‘complementary Tier 1’, instruments will perform under stress and legal challenges.

PS: this blog post could have gone into a lot more detail about the parameters driving the thickness of capital buffers, but it would then have had to be split into 3 or 4 different posts. At least. So please read some of my other posts on the topic to get the bigger picture as this is a complex issue.

Back again

For real. After almost six months without writing anything, I finally got all the green lights I needed to resume both blogging and external contributions. I still won’t have the time for more than a single blog post per week or so, but my pipeline of potential posts has grown over the past few months and should keep me busy for the foreseeable future.

To be fully honest, I really hesitated to write this post. I simply didn’t know what to say. Times are now different from when I started writing back in 2013. There is less media focus on banking crises and unconventional monetary policies. Media’s attention has shifted towards topics such as Trump, Brexit and political correctness and, following years of never ending banking reforms, booming financial markets and declining unemployment, there now seems to be less urgency to understand what went wrong with our global financial system.

Yet there is still plenty of work to do: most people, journalists and academics still believe that a deregulated financial system is inherently unstable and caused the global financial crisis that started almost a decade ago. Much like economic myths such as ‘FDR’s New Deal and WW2 saved the economy from the Great Depression’ have been blindly followed by generations of academics, allowing the ‘banking is inherently unstable’ story to settle into mainstream econ textbooks would build the foundations of the next major financial crisis.

Nevertheless, it does look like the new Trump administration will bring banking and financial regulation back into the spotlight as there is talk of repealing some of the Dodd-Frank act measures. It will be interesting to follow the evolution of this idea, for the following reasons: 1. there has never been any real deregulation of the banking system on aggregate, 2. it will trigger some international regulatory competition as other jurisdictions, such as the EU, seem unlikely to leave their own banks at a competitive disadvantage, 3. we could find out how flexible the regulatory framework of a single country is within an internationally agreed framework such as Basel 3 (which is the basis of the Dodd-Frank act), and 4. we’ll also potentially find out the extent of regulatory capture in the American economy: let’s see how eager large banks are to evolve in a less regulated environment.

It is possible that the Trump administration will only repeal the least damaging and distortive measures of Dodd-Frank. However, if they do surprisingly succeed in repealing major reforms such as Basel 3 capital and liquidity requirements, the implications for international agreements could be huge: Basel could effectively be dead in the water.

How likely is this scenario to happen? Hard to say. My fear remains that repealing parts of the Basel 3 agreements on a unilateral basis would further complexify and opacify the current financial system and bring about massive distortions through international regulatory arbitrage.

Anyway, it’s too early to speculate so I’ll keep monitoring.

Back

Well, sort of. As some of you already know, I was in transition between two jobs this summer. I took some time off and travelled for several weeks. I drove close to 2,000 miles and saw some great mountainous, green, sunny, empty and desert landscapes as well as monuments, in Europe and America; I disconnected from the financial world and relaxed – which is something I really needed. Now I’m back and started my new job. I’m pretty excited but this also will have impacts on my other activities.

It is unclear what this is going to mean for this blog, as well as for my contributions to the Cato Institute’s Alt-m website. It’s going to take another couple of months before things get clearer. Hopefully I will be able to resume my Alt-m contributions.

What I believe is that, due to the nature of my new job, I will have less time to work on blog posts on weekdays. Therefore I am unlikely to publish more than a single blog post a week from now on; perhaps fewer. I have a few in the pipeline, so stay tuned.

Oh and thank you again for your support over those past three years!

Why the money multiplier remains so low

George Selgin’s latest monetary policy primer was a very good explanation of the money multiplier in fractional reserve banking systems. He also suggested that a number of factors may be affecting the current surprisingly low level of the multiplier; a fact that prompted a number of endogenous money theorists to (wrongly) assert that the multiplier was ‘dead’.

In this post, I wish to elaborate on the reasons behind the low multiplier. And those reasons are, in my view, related to banking mechanics and regulatory dynamics.

Let’s first start with a little bit of history to put things in perspective. Some time ago, and following one of my blog posts on the topic, Levi Russel from the Farmer Hayek blog – who is much better than I am at manipulating FRED data – kindly sent me the following chart representing the M2 multiplier (‘MM’) since 1920:

As you can see, the MM also experienced a huge fall during the Great Depression. It then took about forty years for the MM to progressively get back to its pre-Depression level.

Independently of regulatory frameworks, there is a simple underlying reason behind this long recovery time: banking mechanics. As corporations, banks are subject to operating constraints that limit the short-run supply of credit. Banks employ a limited number of bankers, analysts, risk experts and so forth, who are already working full time to extend loans to creditworthy customers in line with the bank’s risk appetite. The client onboarding process, the analysis of the client’s risk, as well as the negotiation of legal agreements, aren’t instantaneous. The funding process itself isn’t either: despite what endogenous money experts assert, extending new loans still requires looking for additional non-central bank funding before or shortly after putting the credit line in place.

At any point in time, it is likely that banks are close to the microeconomic equilibrium ideal of having marginal revenues equal to the marginal economic costs of employing staff and retaining adequate levels of capital and liquidity, and that their managers decided not to extend credit further on purpose: additional revenues were not attractive enough to justify the costs of acquiring them.

The implication of a fall in the MM is that liquidity (in the form of bank reserves/high-powered money) is now abundant in the system relative to the amount of bank money in circulation. Although the cost of liquidity is no longer an issue, banks nevertheless remain subject to operational and credit risk constraints, implying that they cannot put this liquidity to work rapidly.

Indeed, this situation is amplified during a crisis, as the number of creditworthy borrowers falls and banks lay off some of their employees to offset the fall in revenues and rising loan losses. Moreover, liquidity costs also rise and banks decide to hold on to higher liquidity buffers than they used to, mechanically lowering the MM. Consequently, there is no way the MM can rapidly rise. It takes time.
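To make the 'mechanical' link between liquidity buffers and the MM concrete, here is a minimal sketch based on the standard textbook multiplier, (1 + c) / (c + r), where c is the public's currency-to-deposit ratio and r is banks' total reserves-to-deposit ratio. This is a simplification and not the exact M2 series discussed above; the function name and the ratios used are hypothetical, chosen only for illustration.

```python
def money_multiplier(currency_ratio: float, reserve_ratio: float) -> float:
    """Textbook multiplier: broad money / monetary base = (1 + c) / (c + r).

    currency_ratio (c): currency held by the public / deposits
    reserve_ratio  (r): total bank reserves (required + excess) / deposits
    """
    if currency_ratio < 0 or reserve_ratio <= 0:
        raise ValueError("ratios must be non-negative, and reserves positive")
    return (1 + currency_ratio) / (currency_ratio + reserve_ratio)


# Hypothetical ratios, for illustration only (not actual data).
mm_thin_buffers = money_multiplier(currency_ratio=0.10, reserve_ratio=0.02)
mm_thick_buffers = money_multiplier(currency_ratio=0.10, reserve_ratio=0.20)

print(f"MM with thin liquidity buffers:  {mm_thin_buffers:.2f}")
print(f"MM with thick liquidity buffers: {mm_thick_buffers:.2f}")
```

Holding the public's behaviour constant, a larger reserve buffer per unit of deposits lowers the multiplier, whatever the reason banks have for holding it.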

And this was the mistake made by a number of economists who wrongly predicted that hyperinflation would strike in the years following the implementation of quantitative easing policies. Credit cannot mechanistically and instantaneously grow. The financial system is a source of sticky constraints and rigidities. Of course we did see periods of above average MM growth (like just before the Depression or between 1980 and 1987*). But even if those particular growth rates were applied to today’s world, it would take more than twenty years for the MM to get back to its pre-crisis level.
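The recovery-time arithmetic is just compound growth: if the MM grows at a constant annual rate g, it needs ln(target / current) / ln(1 + g) years to get from its current level to a target level. The sketch below assumes a constant growth rate, and the levels and rate plugged in are hypothetical placeholders, not values read off the chart.

```python
import math


def years_to_recover(current_mm: float, target_mm: float, annual_growth: float) -> float:
    """Years needed for the multiplier to grow from current_mm to target_mm
    at a constant compound annual growth rate (e.g. 0.05 for 5%)."""
    if current_mm <= 0 or target_mm <= 0:
        raise ValueError("multiplier levels must be positive")
    if annual_growth <= 0:
        raise ValueError("growth rate must be positive for a recovery to occur")
    if target_mm <= current_mm:
        return 0.0  # already at or above the target
    return math.log(target_mm / current_mm) / math.log(1 + annual_growth)


# Hypothetical inputs, for illustration only.
years = years_to_recover(current_mm=3.0, target_mm=9.0, annual_growth=0.05)
print(f"Years to recover: {years:.1f}")
```

Because the relationship is logarithmic, even a fairly brisk growth rate translates into a long wait when the multiplier has fallen to a fraction of its former level.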

Some could reply that banks don’t need extra resources to invest their liquidity into government bonds. While this is true, some constraints remain in place: 1. the supply of government bonds is limited, and buying large quantities of them would become uneconomical for banks’ margins as bond yields fall towards zero; 2. only a handful of governments have top credit ratings, and those ratings fall as they issue more debt; 3. banks want to diversify their portfolios and certainly do not wish to be exposed only to sovereign risk.

The description above effectively applies to banking systems free of exogenous regulations. But regulatory dynamics can dramatically hinder the money creation process and hence the return of the MM to more normal levels.

Following the 2008/9 crisis, the Western world has been quick to alter regulatory requirements despite the weak economic recovery. In the decade following the crash, Basel 3 (implemented in the US under Dodd-Frank and in the EU under CRD4) built on previous versions of the Basel framework to progressively tighten operating restrictions – thereby reducing banks’ ability to generate marginal revenues – as well as capital, liquidity and funding requirements.

This regulatory package made it even more complex for banks to engage in lending. These are some of the steps that bankers now typically have to take in order to set up a new committed credit line:

- Client onboarding/Know-Your-Customer, which is getting increasingly tightened by authorities due to international sanctions, tax evasion and terrorism

- Credit analysis/risk assessment facility type/comparison with risk appetite and internal risk management guidance

- Estimate what the regulatory liquidity (LCR) and funding (NSFR) requirements are going to be for this specific credit facility.

- Estimate the cost of getting hold of the specific liquid assets and funding instruments (which both are in limited supply on the market and hence costly to acquire) that rules require

- Estimate the amount of regulatory capital (also in limited supply) required for such a facility

- Estimate total risk-adjusted revenues of the new credit facility (plus any other revenues from this customer), deduct total costs, and compare with required regulatory capital

- If return on capital too low vs. management policy, decide whether or not to extend credit based on relationship

- Negotiate loan agreement/covenants
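The costing and decision steps in that list can be sketched as a simple return-on-regulatory-capital check. This is only an illustration under stated assumptions: the class, field names, hurdle rate and figures are all hypothetical, and real banks' pricing models are considerably more involved.

```python
from dataclasses import dataclass


@dataclass
class FacilityEstimate:
    """Hypothetical annual estimates for one new credit facility."""
    risk_adjusted_revenue: float   # facility revenue net of expected credit losses
    other_client_revenue: float    # any other revenue earned from this customer
    liquidity_cost: float          # cost of liquid assets held against the facility (LCR)
    funding_cost: float            # cost of stable funding instruments (NSFR)
    operating_cost: float          # onboarding, analysis, legal, treasury time
    regulatory_capital: float      # capital that must be allocated to the facility


def return_on_capital(f: FacilityEstimate) -> float:
    """Total revenues minus total costs, divided by required regulatory capital."""
    if f.regulatory_capital <= 0:
        raise ValueError("regulatory capital must be positive")
    revenues = f.risk_adjusted_revenue + f.other_client_revenue
    costs = f.liquidity_cost + f.funding_cost + f.operating_cost
    return (revenues - costs) / f.regulatory_capital


def credit_decision(f: FacilityEstimate, hurdle_rate: float,
                    relationship_override: bool = False) -> str:
    """Extend if the return clears management's hurdle rate; otherwise extend
    only if the wider client relationship is judged to justify it."""
    if return_on_capital(f) >= hurdle_rate:
        return "extend"
    return "extend (relationship)" if relationship_override else "decline"


# Hypothetical figures, for illustration only.
facility = FacilityEstimate(
    risk_adjusted_revenue=1.2, other_client_revenue=0.3,
    liquidity_cost=0.2, funding_cost=0.3, operating_cost=0.4,
    regulatory_capital=8.0,
)
print(f"Return on capital: {return_on_capital(facility):.1%}")
print(credit_decision(facility, hurdle_rate=0.10))
```

Any rule that raises the liquidity, funding or capital inputs lowers the computed return, so facilities that used to clear the hurdle can stop doing so without any change in the borrower's credit quality.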

Those steps require human resources in relationship management, risk management, legal and treasury. As Basel 3 has lengthened and complicated the process in the post-crisis years, it is unsurprising that banks, already facing declining revenues and cost-cutting (i.e. staff reductions), haven’t been able to grow their balance sheets as rapidly as bank reserves were flowing into the system. Moreover, faced with harsher capital regulations and unending litigation costs in a world of low or negative interest rates, banks found it extremely hard to find remunerative lending opportunities. Consequently, many banks have now entirely exited a number of lending products whose marginal costs have been pushed up by regulation above their marginal revenues. They have deleveraged to comply with capitalisation rules rather than raising capital, so as to avoid diluting shareholders who are already suffering from zero returns (and are therefore at risk of exiting their investment altogether). I guess I don’t have to explain that a deleveraging banking system is antithetical to a rising MM.

Finally, I shall include monetary policy in the ‘regulatory dynamics’ category, and more particularly the decision by a number of central banks to pay interest on excess reserves. It is not the purpose of this post to focus on this rather strange monetary tool; George Selgin wrote plenty of excellent posts deconstructing its rationale.

A last note however. While we’ve mostly been describing the factors influencing the supply of credit, let’s not forget to factor in the other side of the equation: demand for credit. During or following a credit crisis, borrowers often attempt to repair their balance sheets by deleveraging, affecting the demand for new loans.

In the end, it looks unsurprising to see the money multiplier remaining so low and taking decades to recover following a rapid fall. As history shows, this is a recurring fact, dictated by the day to day operating rigidities of the business of banking, and with consequences for the bank lending channel of monetary policy. Our dear multiplier isn’t dead; it is just sleeping and merely unlikely to reach pre-crisis levels for another few decades.

*Such a rapid growth rate in the 1980s is probably linked to banks trying to add more remunerative lending to their portfolios as rapidly as possible. This is because, as both nominal interest rates and inflation were shooting up, banks’ margins were becoming rapidly compressed due to legacy lending extended in earlier periods of lower nominal rates.

This post was re-published on Alt-M.

Brexit regime uncertainty: some evidence

Following my latest post on the regulatory regime uncertainty caused by Brexit, evidence of the damage has started to emerge.

Unsurprisingly, and in line with the studies mentioned in my previous post, uncertainty is affecting both the demand and supply sides of credit.

On the demand side, the FT reports that

like other small British companies … longer term prospects have been altered by the EU referendum. Last month’s vote has dramatically increased uncertainty on issues ranging from regulatory standards to supply chains. […]

Other local companies have reported laying off staff, raising prices, or scaling back on investment plans, among a range of responses that also include seeking to take advantage of the weaker pound, in a survey compiled by Business West, a lobby group in the south-west of England.

This is evidently not conducive to borrowing and investments, and City AM reports that the number of M&A deals in Britain indeed significantly dropped in the first half of the year. Furthermore, the FT also reports that large British banks “expect demand for credit from businesses and households to fall as a result of post-Brexit economic uncertainty, according to a Bank of England review.”

The same article seems to show that, on the supply side, banks are for the time being only tightening commercial real estate lending given their pessimistic view of the sector (which also was a major contributor to UK banks’ losses during the financial crisis).

Finally, another FT article shows that

An index tracking sentiment in the European banking sector has reached an all-time low, even surpassing levels seen in 2012 when Mario Draghi promised the European Central Bank was prepared to do “whatever it takes” to stabilise the bloc and protect the euro.

This is likely to affect the supply of credit in the medium term across the whole of Europe as Brexit uncertainty exacerbates already-existing European banking issues. The shorter this lasts the better.

Sadly, this situation could take up to six years, according to the UK foreign minister…

Brexit: the consequences on lending

The British voted in favour of leaving the European Union at the end of last week. Whether the UK effectively leaves the EU and what sort of arrangement emerges is yet to be determined. What is certain is that the business world now finds itself in the most uncertain of political environments: no one knows what kind of ruleset is actually going to be put in place and how long this state of limbo will last.

The whole situation is likely to prove damaging for the UK economy as businesses freeze hiring and investments until they have a better understanding of the rules they’re going to have to comply with. Brexit is in effect a typical case of what Robert Higgs named ‘regime uncertainty’.

Higgs described how regulatory regime uncertainty considerably hampered private investments in the US in the 1930s, which in turn affected economic recovery. This period saw Hoover and then FDR’s New Deal make considerable changes to the US legal and regulatory frameworks. According to Higgs:

given the unparalleled outpouring of business-threatening laws, regulations, and court decisions, the oft-stated hostility of President Roosevelt and his lieutenants toward investors as a class, and the character of the antibusiness zealots who composed the strategists and administrators of the New Deal from 1935 to 1941, the political climate could hardly have failed to discourage some investors from making fresh long-term commitments … there exists a great deal of direct evidence that investors did feel extraordinarily uncertain about the future of the property-rights regime between 1935 and 1941. Historians have recorded countless statements by contemporaries to that effect; and the poll data presented earlier confirm that in the years just before the war most business executives expected substantial attenuations of private property rights ranging up to “complete economic dictatorship.”

Seen in the light of a modern expectations framework, Higgs’ theory does make sense: businesses are unlikely to engage in activities whose legal treatment is uncertain. In one of my first ever posts I wrote that, given the uncertainty inherent to a productive process that takes time, a stable ruleset and predictable treatment of property rights were fundamental features of intertemporal coordination between savers/investors and borrowers/entrepreneurs.

Stable rules provide a clear guide to entrepreneurs: constraints are known in advance, allowing them to anticipate future demand and plan accordingly with the understanding that their investments are protected as long as they remain within legal boundaries. The Rule of Law – what Hayek described as a ‘meta-legal’ framework that spontaneously and progressively emerged, and which is mostly incarnated today by the Common Law – represents the most effective instance of stable rules. But even the less stable Civil Law – which is mainly comprised of what Hayek called ‘legislation’ – can represent a relatively effective legal framework as long as rules aren’t changed on a regular basis. Surprisingly however, the academic literature on regime uncertainty remains rather thin.

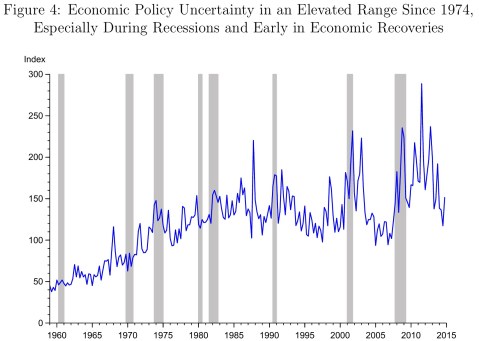

Coincidentally, a paper published earlier this year by Bordo, Duca and Koch (Economic Policy Uncertainty and the Credit Channel: Aggregate and Bank Level U.S. Evidence Over Several Decades, also available on NBER here) adds extra empirical evidence to Higgs’ original findings. Basing their research on a recently published ‘economic policy uncertainty index’ (EPU hereafter), and controlling for other macroeconomic indicators, they look at how regime uncertainty affects bank lending in the US between 1961 and 2014. Overall, they find that

policy uncertainty significantly slows U.S. bank credit growth, consistent with it having an effect on broad loan supply and demand. We find that lagged changes in the EPU index are negatively and significantly linked to the growth rate of bank lending both at the aggregate and cross-sectional levels.

They also find that this effect is more pronounced for larger, less well-capitalised banks, as well as banks holding smaller amounts of cash reserves, and that the effect is likely amplified in Europe*:

The results have several important implications. First, statistical evidence suggests that economic policy uncertainty has affected bank lending in the U.S., which other studies have found to have important effects on economic activity and which we also find. This could have implications for Europe, where the Baker-Bloom-Davis (BBD) index of economic policy uncertainty rose more than in the U.S. during the post-crisis slump and the economies are more bank dependent. More recently, the EPU index in Europe has not recovered as quickly as in the U.S., where the subsequent recovery in bank-lending growth has been stronger as has been the overall recovery in GDP growth.

Given that London represents a substantial share of Europe’s financial activity and is the main Euro clearing centre (a situation that the ECB has fought for years), the implications for a post-Brexit Europe are clear. Domestically, the demand and supply of loans in the UK are likely to remain subdued as long as the legal framework that will apply to British banks and corporations in the future is unknown. The uncertainty is also going to hurt foreign banks, which have large operations in London thanks to the UK’s ‘passporting’ rights (which allow financial firms based in the UK to offer services throughout the EU under single market rules). Many of those institutions are unsure whether to move operations to other EU jurisdictions as nobody knows if the UK will be able to retain single market access and Euro clearing. This paralyses business-making in a period of already heightened regulatory uncertainty.

Legal uncertainty affecting the financial sector is of the worst kind given its repercussions on economic activity. It is therefore unsurprising that, following the Brexit vote, stockmarkets in the EU have fallen more than those in the UK. The announcement even triggered the worst fall in EU banks’ share price in history.

Brexit will have repercussions on lending and investments both in the UK and in the EU as long as this state of uncertainty lasts. And it can even end up being more damaging for other EU countries, already suffering from low economic growth and constantly-changing banking regulation. Of course, politicians seem unaware of this issue: some of the top ‘Leave’ campaign leaders mentioned triggering Article 50 of the Lisbon Treaty (which brings about the departure of a member of the EU) only in… 2020.

Given that it takes two years of negotiation following the trigger for a country to be formally out of the union, and that undoing EU laws while negotiating new trade deals can last many more years, it is clear that those politicians are at best – some would say unsurprisingly – completely ignorant of the damage they are doing. It took Greenland, which withdrew from the pre-EU in 1985, three years to negotiate the terms of its exit with the union at a time when EU laws were not as invasive as they are now. Good luck to Europeans.

*They also question the timing of the implementation of new harsh banking regulations (i.e. Basel 3) which may have delayed the post-crisis economic recovery in their view (a point I have made in a number of posts over the years).

PS: Bordo, Duca and Koch also provide further evidence of the 1990s deregulation myth:

It wasn’t a subprime crisis

The term ‘subprime crisis’ is still widely used to describe the recent financial crisis. Yet it gives the impression that the crisis emanated from a very narrow asset class (i.e. subprime mortgage lending) that somehow managed to spread throughout the US economy and the wider world and knock down most Western economies. The narrative is that financial innovation and engineering fuelled this process by creating products like RMBS and CDOs.

On closer examination, this story cannot hold. Real estate markets boomed and fell simultaneously around the world despite no subprime lending going on in these other markets. Mortgage lenders in countries such as Spain, Ireland and the UK suffered or even collapsed when falling house prices forced them to provision huge amounts, damaging their balance sheet and ability to lend.

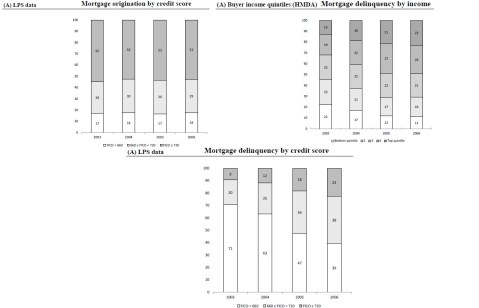

Even in the US, the crisis wasn’t triggered by subprime but by an unsustainable allocation of resources towards the entire real estate sector. New research adds further evidence to this view (Loan Origination and Defaults in the Mortgage Crisis: The Role of the Middle Class). In this paper, Adelino, Schoar and Severino demonstrate that “mortgage originations increased for borrowers across all income levels and FICO scores” and that “middle-income, high-income, and prime borrowers all sharply increased their share of delinquencies in the crisis.” This conclusion is in stark contrast with that of Mian and Sufi’s famous 2009 article (which they reformulated in their book House of Debt), which had fuelled the theory of a subprime origin to the crisis (I had already voiced doubts about their theory here).

More precisely, Adelino et al find that credit flowed towards all sorts of borrowers in the years preceding the crisis, and not just disproportionately towards subprime ones:

between 2002 and 2006 mortgage origination increased for borrowers across the whole income distribution, not just for low-income or subprime borrowers. In line with previous years, the majority of new mortgages by value were originated to middle-class and high-income segments of the population even at the peak of the boom. Similarly, the share of originations to subprime borrowers (those with a credit score below 660) relative to high credit score borrowers remained stable across the pre-crisis period. Although the pace of origination rose in low-income ZIP codes, this increase did not translate into significant changes in the overall distribution of credit, given that it started from a low base (borrowers in low-income and subprime ZIP codes obtain fewer and significantly smaller mortgages on average).

They also find that non-performing loans rose across the board, implying that losses were triggered by all sorts of real estate loans:

We show that the share of mortgage dollars in delinquency stemming from the lowest income groups decreased during the financial crisis. In contrast, middle- and high-income borrowers constituted a larger share of mortgage dollars in delinquency than in any prior year. The magnitudes are large: for the 2003 mortgage cohort, the top quintile of the income distribution constituted only 13% of mortgage dollars in delinquency three years later, whereas for the 2006 cohort, the top income quintile made up 23% of the delinquencies three years out. In contrast, over the same period, the contribution to delinquencies from the ZIP codes in the lowest 20% of the income distribution fell from 22% to only 11%.

We find a similar pattern when we look at credit scores: the share of mortgage defaults from borrowers with high credit scores increased during the crisis, whereas the share for subprime borrowers dropped.

Adelino et al therefore provide evidence that links the US real estate crisis with that of other countries: same roots, same consequences. The US did not experience a crisis because of a sudden and sharp increase in subprime borrowing. Subprime merely was a bystander; a symptom of deeper economic problems. Rather, the US experienced the same sort of crisis as its European counterparts: an overall debt-fuelled rise in real estate prices.

And this makes perfect sense: I keep emphasising the role played by Basel’s capital regulation in inflating the housing bubble. Low capital requirements on real estate loans provided bankers an incentive to maximise profitability within regulatory boundaries, directing the flow of credit towards housing, inflating the bubble. A purely subprime story with relatively low or no effect on other types of borrowers would not really fit this theory and would not match the experience of other countries.

Do not get me wrong though: subprime lending probably amplified the losses that some banks experienced, and did spread the crisis abroad to some extent. While the German real estate market remained roughly stable throughout the period, a number of German Landesbanks that had invested in tranches of structured products based on US subprime or near-subprime mortgages did suffer quite badly when the market price of those products radically fell.

Another very recent publication (The effect of bank shocks on firm-level and aggregate investment, by Amador and Nagengast) looks at what happened to lending and investment in the Portuguese economy following bank shocks during the 2005 to 2013 period. While I am not aware of similarly-structured studies of bank shocks in the US economy, this paper does seem to be in line with empirical results obtained by other researchers focusing on countries as diverse as Japan, Germany and emerging markets. They found that

credit supply shocks have a strong impact on firm-level investment in the Portuguese economy over and above aggregate demand conditions and firm-specific investment opportunities. In addition, we also consider how the effect of credit supply shocks on investment varies with the capital structure and size of firms. We find that firms with access to alternative financing sources are generally less vulnerable to the adverse effect of bank shocks on investment and partially manage to offset their shortfall of bank credit by increasing their financing from other sources. Larger firms also appear to be in a better position to cope with the unfavourable effects of bank shocks mainly since their banks do not curtail their credit supply as much as for small firms.

Those results look unsurprising to me and are surely amplified by Basel rules that stipulate that small firms require proportionally more capital than large ones (a requirement that is hardly justified).

But combined with the empirical evidence provided above by Adelino et al, they allow me to reiterate my doubts regarding the claim that NGDP targeting would have merely led to a mild recession. This is a view of the crisis that some market monetarists accept, and which was summarised by Beckworth and Ponnuru in an NYT column earlier this year:

In retrospect, economists have concluded that a recession began in December 2007. But this recession started very mildly. Through early 2008, even as investors kept pulling money out of the shadow banks, key economic indicators such as inflation and nominal spending — the total amount of dollars being spent throughout the economy — barely budged. It looked as if the economy would be relatively unscathed, as many forecasters were saying at the time. The problem was manageable: According to Gary Gorton, an economist at Yale, roughly 6 percent of banking assets were tied to subprime mortgages in 2007.

I wrote elsewhere why I believe this view is inaccurate. But this new evidence provided by Adelino et al adds strength to my previous arguments by showing that the crisis was a full-scale real estate collapse rather than a mere and ‘manageable’ subprime-focused crisis. It should also make us think twice about the ability of NGDP targeting to cope with a situation during which banks’ balance sheets are highly damaged, leading to reduced lending and aggregate private investments throughout the economy.

That said, I do view NGDPT as a much better alternative to our current monetary arrangement. While it could potentially have alleviated the worst symptoms of the crash, I believe it is quite a stretch to think it could have led to a merely ‘mild’ recession. Please bear in mind, however, that my reasoning only applies within the current institutional constraints on the implementation of monetary policy.

Were those constraints lifted, NGDP targeting could be more effective in stimulating the economy post-crash. Whether this is desirable is another issue altogether, and I tend to adhere to Salter and Cachanosky’s view that the composition of NGDP also matters*. After all, NGDP growth was roughly stable for the decade preceding the crisis, yet hid some unsustainable allocation of resources. Consequently, it seems to me that distortionary regulatory frameworks limit the effectiveness of a stable NGDP path. But would we even need NGDP targeting in a free market?

*As a side note, an interesting paper published last year seems to provide some evidence of the distortionary effect on relative prices of the usual monetary injection channel of monetary policy (i.e. Cantillon effect). This is only a lab experiment, but its conclusions are clear:

Although the theoretical model predicts, in line with mainstream economics, that the process of monetary injection is irrelevant and neutral, the experiment shows that credit expansion exerts a significant distortionary effect on resource allocation. Credit expansion also has a redistributive effect across subjects in favor of those who have a high consumption preference for the good whose production is stimulated by credit. The allocative effect of credit expansion comes from the fact that the increase in money is injected into the credit market, whereas lump-sum transfers affect all sectors evenly.

This finding is reminiscent of the insights of Cantillon (1755), who emphasised that an increase in money primarily affects relative prices rather than all prices to the same extent because money enters the economy at a certain point. This suggests that the process of monetary injection and its economic consequences should be addressed in implementing specific monetary policy measures or, more importantly, in designing the monetary system as a whole.

PS: This is my first blog post in a while. I am currently transitioning between jobs, and am pretty busy as a result.
